The Robotics Roundup is a weekly newspost going over some of the most exciting developments in robotics over the past week.

In today’s edition we have:

- Autonomous buses: It’s all about when, not how, they sound

- Delicate, diligent, transient

- AI-driven robots start hunting for novel materials without help from humans

- A Soft-Bodied Aerial Robot for Collision Resilience and Contact-Reactive Perching

- Quadrupeds Are Learning to Dribble, Catch, and Balance

Autonomous buses: It’s all about when, not how, they sound

A team of researchers from Cornell Bowers College of Computing and Information Science and Linköping University has been studying how autonomous electric buses can better communicate with pedestrians and cyclists on the road. Standard vehicle noises, such as beeps and dings, worked to grab people’s attention. “The Wheels on the Bus” and similar jingles were also effective in signaling cyclists to clear out before the brakes engaged, and they elicited smiles and waves from pedestrians. However, the researchers found that the timing and duration of the sounds mattered most in signaling the bus’s intentions. The researchers hope their approach to designing sound can be applied to any autonomous system or robot. They also emphasize the importance of understanding traffic as a social phenomenon and giving autonomous vehicles a better way to participate in the conversation.

Delicate, diligent, transient

Empa researchers at the Sustainability Robotics laboratory in Dübendorf are developing low-cost, sustainable sensors and flying devices using potatoes, wood waste, and dyer’s lichen. These bio-gliders are completely biodegradable, with a design inspired by the flying seeds of the Java cucumber. The researchers want to use the data from the smart sensors to monitor the condition of the forest soil and its biological and chemical balance. The transport vehicle for the biosensor is a glider made of conventional potato starch, and assembly is as simple as printing the material and pressing it into shape. Once the gliders have completed their data collection, they can be left in place to biodegrade safely without harming the environment.

AI-driven robots start hunting for novel materials without help from humans

The Lawrence Berkeley National Laboratory’s Materials Project has developed an AI-driven laboratory to synthesize new materials, which has already produced about 40 target materials in just a few months. The A-Lab system uses artificial intelligence to determine the best way to synthesize a particular material, then uses robotic arms to execute the synthesis from the system’s nearly 200 different starting materials, while also adjusting reaction conditions such as temperature, drying time, and gas composition. After analysis, the results are fed back into the Materials Project database for the human researchers. The system produces materials at a rate about 100 times faster than humans in a traditional laboratory.

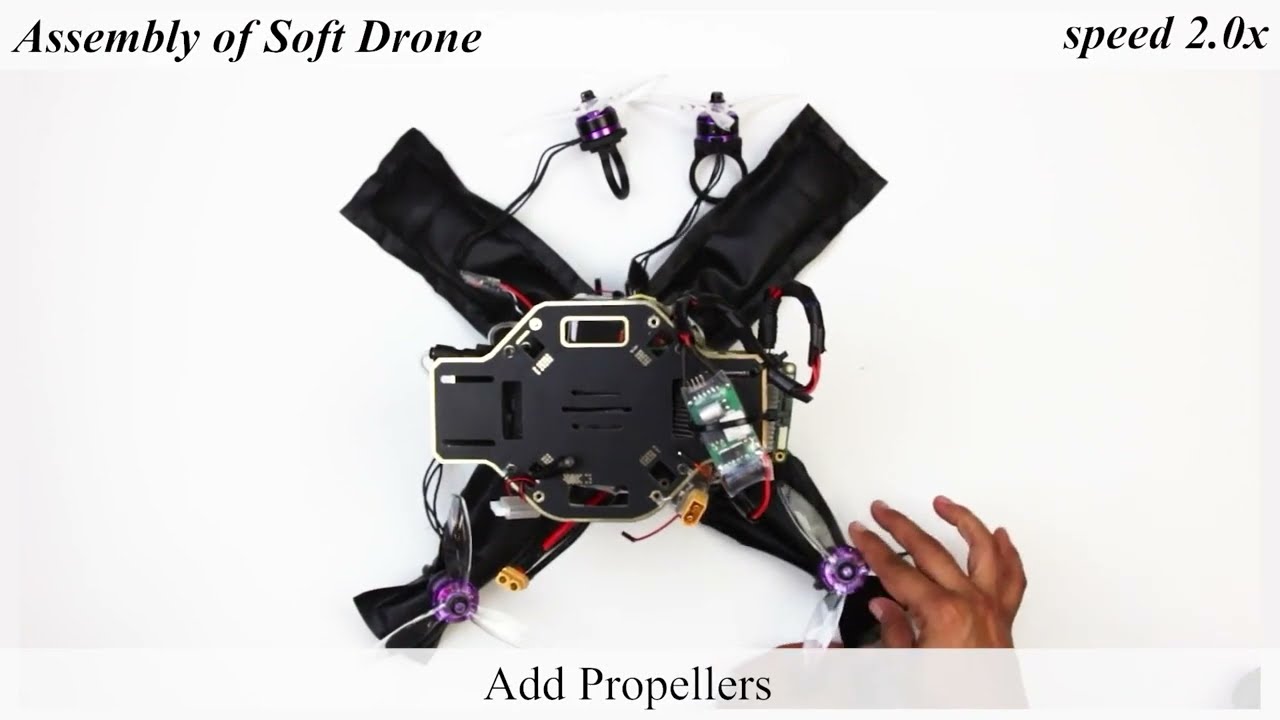

A Soft-Bodied Aerial Robot for Collision Resilience and Contact-Reactive Perching

SoBAR is a novel robotics platform capable of pneumatically varying its body stiffness to achieve intrinsic collision resilience. This robot aims to address the limited interaction capabilities of current aerial robots in unstructured environments, including their inability to tolerate collisions and to successfully land or perch on objects of unknown shapes, sizes, and textures. Unlike conventional rigid aerial robots, SoBAR can repeatedly endure and recover from collisions in various directions, due to its soft and deformable structure.

Quadrupeds Are Learning to Dribble, Catch, and Balance

Three papers to be presented at the 2023 International Conference on Robotics and Automation (ICRA) document new capabilities of quadrupedal robot platforms: dribbling, catching, and traversing a balance beam. The first paper, by MIT roboticists, describes how they taught a quadruped to dribble a soccer ball across rough terrain. The second paper, by University of Zurich researchers, explains how they used an event camera to train an ANYmal-C quadruped to catch a ball thrown from 4 meters away and traveling at up to 15 meters per second. The third paper, by CMU researchers, reports on how they trained a quadruped to traverse a balance beam using a combination of simulation and real-world experiments.