The Robotics Roundup is a weekly news post covering some of the most exciting developments in robotics from the past week.

In today’s edition we have:

- Shock video shows Atlas robot training for automotive work

- One person can supervise ‘swarm’ of 100 unmanned autonomous vehicles, OSU research shows

- Building robots for “Zero Mass” space exploration

- The Uncanny Valley: Advancements And Anxieties Of AI That Mimics Life

- ChatGPT-Powered Robots Build Better Human Connections

Shock video shows Atlas robot training for automotive work

Boston Dynamics’ Atlas robot, widely regarded as one of the most advanced humanoid robots ever built, has demonstrated its capabilities in automotive work in a recent video. Car manufacturing, which involves large volumes, heavy parts, and high-precision tasks, is a natural fit for robots. While many robots already work on manufacturing and assembly lines, Atlas’ demonstration opens up possibilities for humanoid robots in more chaotic and disorganised job roles. Although Atlas is primarily a research platform, its abilities, together with Hyundai’s acquisition of Boston Dynamics (announced in 2020), have sparked speculation that the robot is heading towards commercial work. The video suggests that Atlas might be operating autonomously, but this has not been confirmed. Atlas is an extraordinary testbed and a leader in its field, but it is not designed for mass manufacturing as a commercial product. If humanoid robots were to be deployed at scale, a different design would likely be necessary.

One person can supervise ‘swarm’ of 100 unmanned autonomous vehicles, OSU research shows

A recent research project involving Oregon State University has shown that a “swarm” of more than 100 autonomous ground and aerial robots can be successfully managed by a single person without imposing a significant workload. This development marks substantial progress towards the cost-effective and efficient use of swarms for purposes such as wildland firefighting, package delivery, and urban disaster response. The study, part of the Defense Advanced Research Projects Agency’s Offensive Swarm-Enabled Tactics (OFFSET) program, involved deploying swarms of up to 250 autonomous vehicles in urban environments to collect information, potentially improving safety for troops and civilians. The project further developed the systems, software, and user interfaces that a single operator, termed the “swarm commander”, needs to manage these autonomous units effectively.

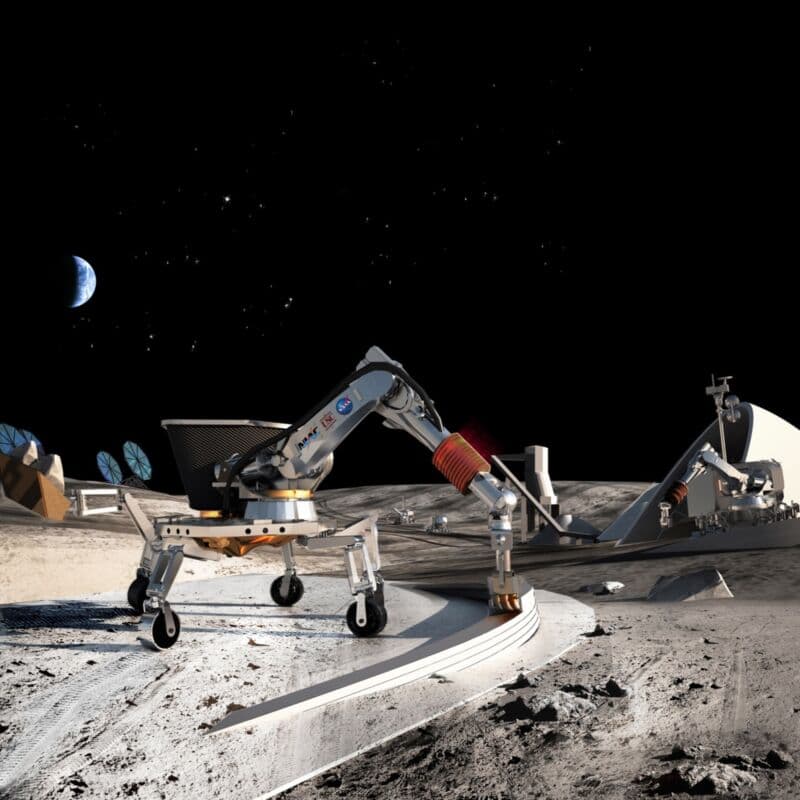

Building robots for “Zero Mass” space exploration

NASA Ames Research Center and Stanford University are experimenting with robots, advanced building materials, and algorithms for “zero mass exploration”, which involves self-replicating machines of the kind conceived by John von Neumann in the 1940s. The technology of reprogrammable metamaterials, or materials that can change their configuration autonomously, has advanced to the point where it could be used for space exploration. One key innovation is the use of prefabricated “voxels” (standardized, reconfigurable building blocks) that are assembled by robots. These voxels are made from an incredibly light carbon-fiber-reinforced polymer called StattechNN-40CF. In a lab experiment, three robots autonomously used all 256 voxels to assemble a shelter in four and a half days. The team is now focusing on demonstrating how their building blocks and robots could be used to build communication and solar towers on the Moon.

The Uncanny Valley: Advancements And Anxieties Of AI That Mimics Life

The concept of the “uncanny valley”, which refers to the discomfort people feel when presented with something that looks almost, but not exactly, human, is becoming increasingly relevant due to advances in lifelike robots, computer-generated (CG) characters, and digital avatars. While physically distinguishable robots like Ameca are still being developed, CG artificial humans and AI-generated synthetic human faces and voices are becoming startlingly realistic, potentially leading to more encounters with the uncanny valley phenomenon. There are concerns about the impact this could have on mental health and trust in technology. Overcoming this challenge is both a technical and a psychological problem, requiring a blend of engineering and human skills. Two potential solutions are the development of ‘emotional AI’, which detects and responds appropriately to human emotional signals, and a shift towards viewing AI as an augmentation tool rather than a simulation.

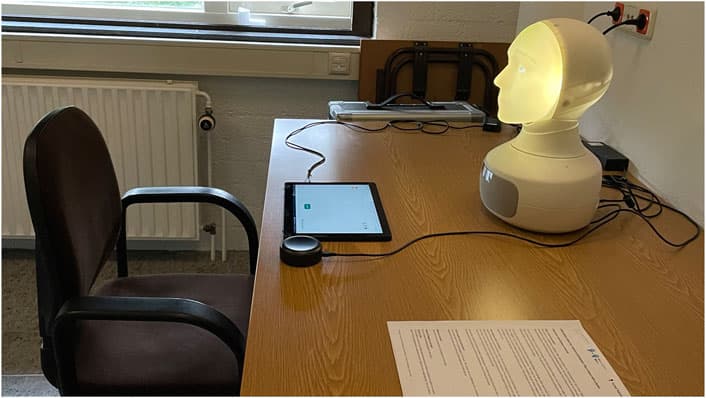

ChatGPT-Powered Robots Build Better Human Connections

A recent study from the Max Planck Institute for Psycholinguistics has shown that robots equipped with ChatGPT, which enables them to respond more expressively, are viewed as more likable, more trustworthy, and better able to form connections with people. In a card sorting game where participants categorized images based on the emotions they evoked, robots employing ChatGPT provided feedback with facial expressions that matched, contrasted with, or remained neutral to the predicted human emotions. The study found that participants had a more positive experience and performed better when the robot’s emotional responses were context-appropriate. This research could guide the development of future robots, particularly in service roles like therapy, companionship, and customer service, where accurate interpretation of and appropriate response to human emotions are essential.