Happy 4th of July!!!

The Robotics Roundup is a weekly news post covering some of the most exciting developments in robotics from the past week.

In today’s edition we have:

- Amazon’s New Robots Are Rolling Out an Automation Revolution

- The Creative Robot Contest for Decommissioning

- Caltech’s new ‘Morphobot’ is a little transforming robot that can walk, drive, and fly

- Pneumatic Actuators Give Robot Cheetah-Like Acceleration

- AnyTeleop Lets You Control Robot Arms and Hands From Anywhere with Just a Camera

Amazon’s New Robots Are Rolling Out an Automation Revolution

Amazon is deploying a new generation of robots in its fulfillment centers to increase automation. These robots have evolved significantly since the company’s acquisition of Kiva Systems in 2012. The current generation of robots, such as Hercules, Pegasus, and Proteus, perform various tasks in fulfillment centers, from lifting and moving shelves to picking and packing items. However, there are still tasks that require human dexterity and perception, such as retrieving small items from storage shelves. The introduction of newer, more capable robots could shift the balance between automation and human workers in Amazon’s operations. While some jobs may be eliminated, enhanced automation is also creating new roles in robot manufacturing and maintenance.

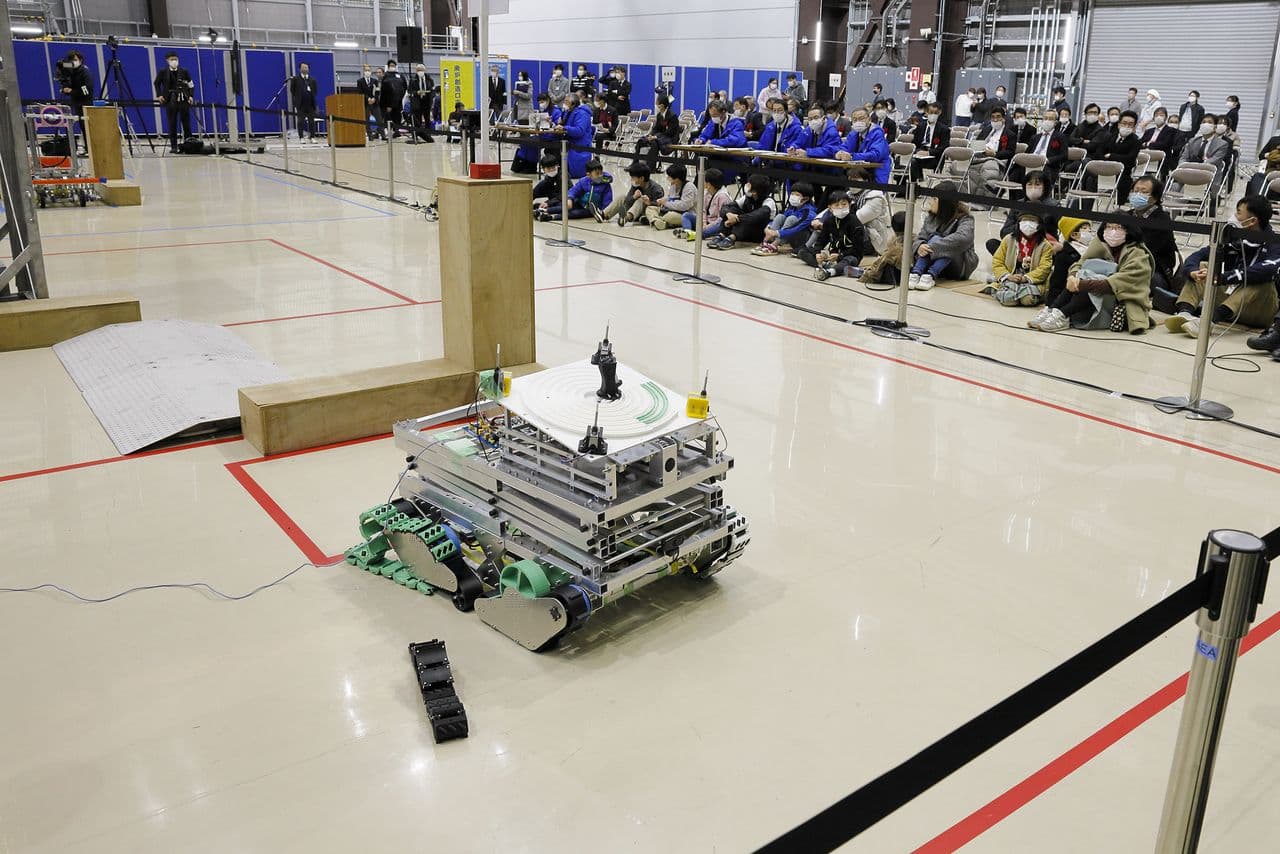

The Creative Robot Contest for Decommissioning

The Creative Robot Contest for Decommissioning is a yearly contest held by the Naraha Center for Remote Control Technology Development to encourage young engineering students to come up with innovative designs for robotics systems for the decommissioning of the Fukushima Daiichi Nuclear Power Station. The competition challenges specialized vocational high school students to maneuver their robots in a simulated reactor to complete certain tasks. Now in its tenth year, the contest is contributing to the education of the next generation of Japan’s engineers, as well as helping to develop a solution to the difficult problem of safe decommissioning of a nuclear accident site.

Caltech’s new ‘Morphobot’ is a little transforming robot that can walk, drive, and fly

Caltech has developed a robot called the M4 (Multi-Modal Mobility Morphobot), which can change its shape to drive, fly, and walk as needed. The M4 can quickly convert its large wheels into rotors for flying, and can even stand on two wheels for better maneuverability. The robot autonomously decides its form, using artificial intelligence to assess the best mode of navigation based on its surroundings and destination. The M4’s versatility could have applications in various fields, such as transporting injured individuals or exploring other planets, where access to several modes of locomotion greatly increases the options for completing a mission.

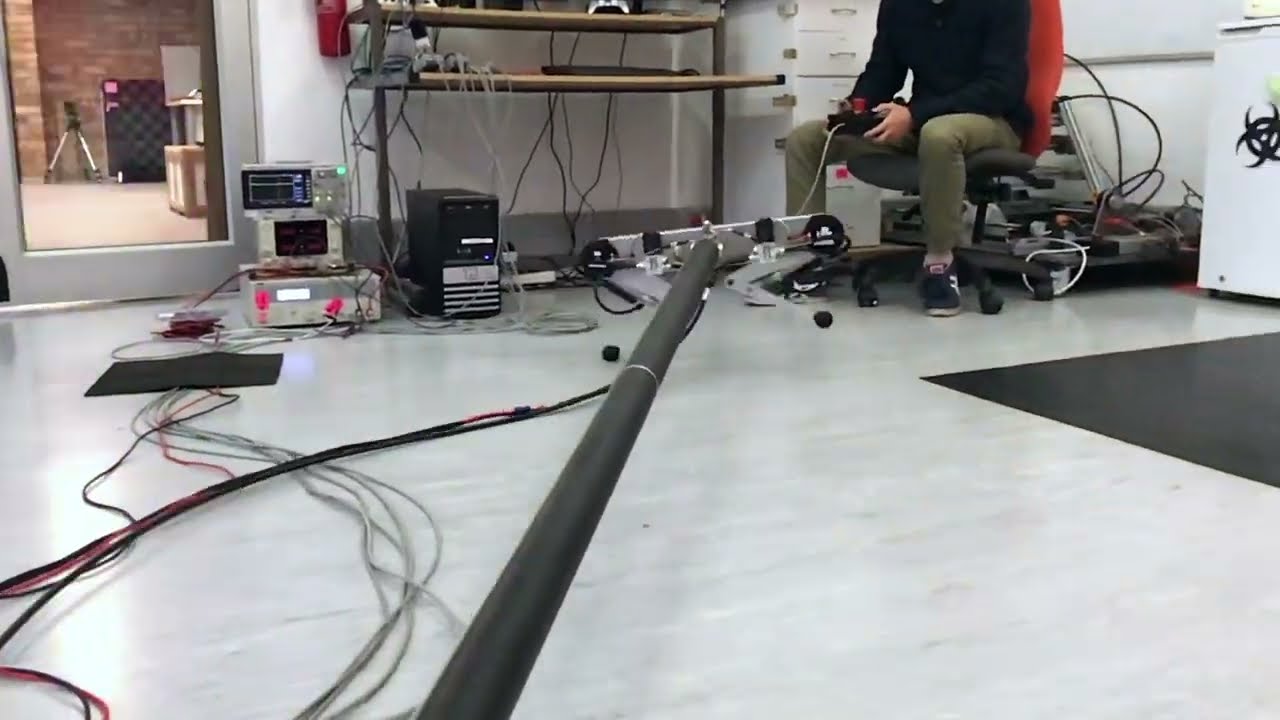

Pneumatic Actuators Give Robot Cheetah-Like Acceleration

Researchers at the University of Cape Town are exploring the use of pneumatic actuators in legged robots inspired by the high-speed maneuvering of cheetahs. They argue that pneumatics, which use gas as a working fluid instead of liquid, offer a high force-to-weight ratio and built-in compliance, making them a cost-effective alternative to hydraulics. While pneumatics are more difficult to control, the researchers suggest that fine force control may not be necessary for rapid maneuverability tasks. They have built a legged robot called Kemba that combines electric motors for precise control and pneumatic pistons for explosive actuation. With this approach, Kemba is capable of rapid acceleration and controlled locomotion, showing promise for future applications in biomechanics research and potentially lowering the cost of legged robots.

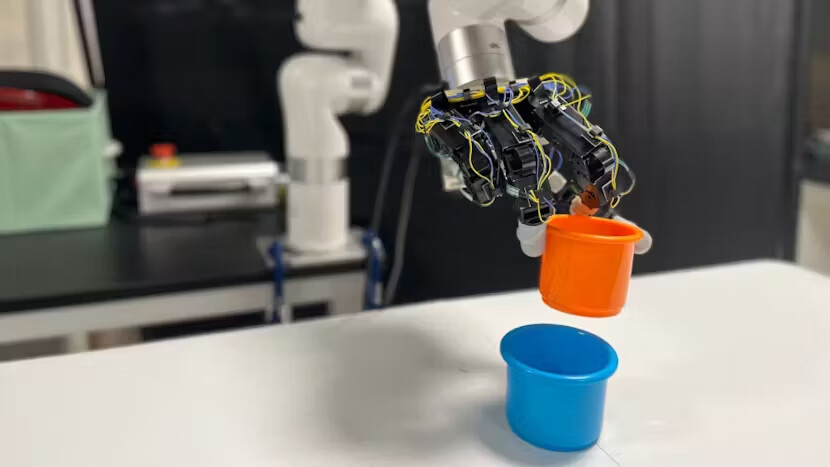

AnyTeleop Lets You Control Robot Arms and Hands From Anywhere with Just a Camera

Researchers from the University of California San Diego and NVIDIA have developed AnyTeleop, a vision-based system for high-dexterity teleoperation of robot arms and hands. AnyTeleop is a general system that can be used with any robot arm and hand combination, as well as any camera system. The researchers claim that AnyTeleop outperforms other systems optimized for specific combinations and offers low-cost and low-intrusion hand tracking using vision-based technology. The system does not require direct contact with the user, calibration, or depth data. It supports control of multiple robot hands or arms and collaborative control from multiple human operators. The researchers have demonstrated the performance and flexibility of AnyTeleop in both simulation and real-world scenarios, and their commitment to an open-source approach should encourage further research in teleoperation.