The Robotics Roundup is a weekly newspost covering some of the most exciting developments in robotics from the past week.

In today’s edition we have:

- These Robots Helped Understand How Insects Evolved Two Distinct Strategies of Flight

- How Nvidia became a major player in robotics

- Finger-shaped sensor enables more dexterous robots

- Disney’s super-cute new bipedal robot demonstrates the power of style

- This robot learns to clean your space just the way you like it

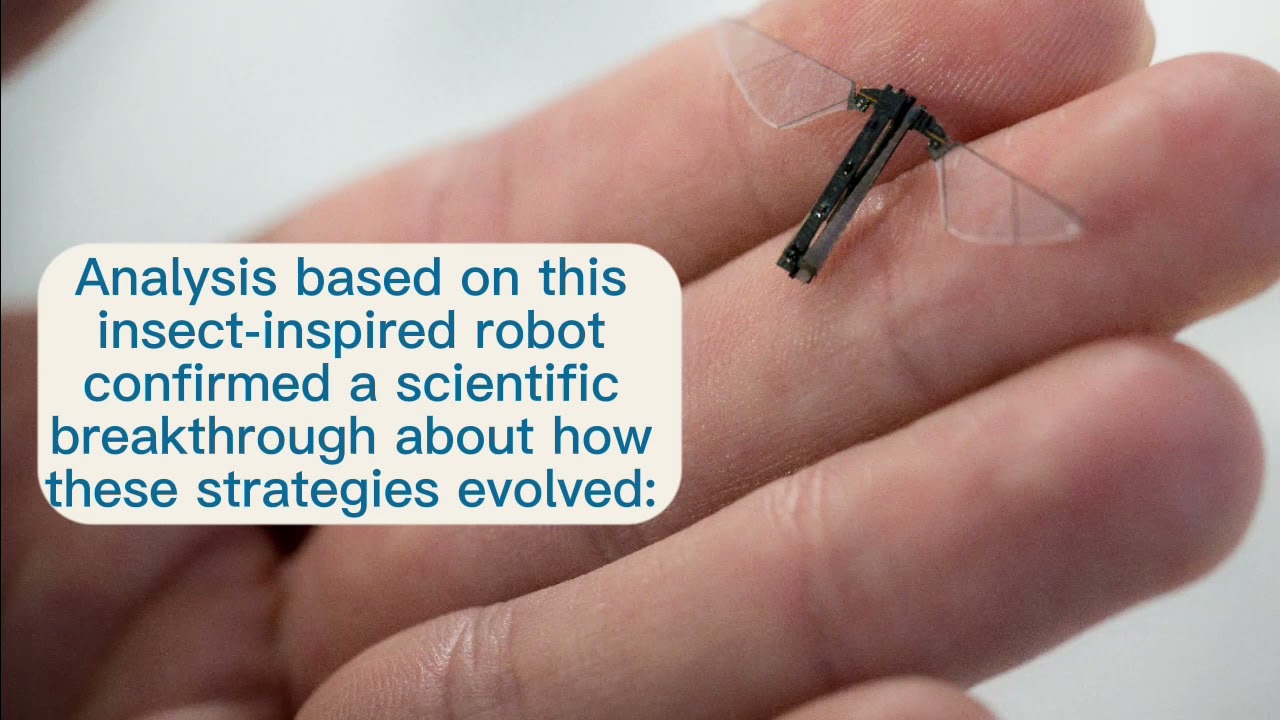

These Robots Helped Understand How Insects Evolved Two Distinct Strategies of Flight

A team of engineers from the University of California San Diego and biophysicists from the Georgia Institute of Technology has made a significant breakthrough in understanding how insect flight evolved. Through a six-year collaboration, they found that asynchronous flight, in which wingbeats are not triggered one-for-one by signals from the nervous system, evolved in a single common ancestor of insects. The researchers used robots to perform experiments and discovered that an insect can transition between synchronous and asynchronous flight modes gradually and smoothly. The study not only provides insights into the evolution of insect flight but also has implications for robotics, particularly for designing responsive and adaptive flapping-wing systems.

How Nvidia became a major player in robotics

Nvidia’s robotics strategy has paid off in recent years as the company’s Jetson platform has gained popularity among developers. The platform has been widely adopted by a range of users, from hobbyists to multinational corporations, and has become a key tool for developing AI and machine learning applications in robotics. Nvidia’s expertise in silicon design and low-power systems, together with its experience in gaming, has positioned the company well in the robotics space.

Finger-shaped sensor enables more dexterous robots

MIT researchers have developed a camera-based touch sensor called the GelSight Svelte that is long, curved, and shaped like a human finger. The sensor provides high-resolution tactile sensing over a large area, allowing a robotic hand to grasp objects using the entire sensing area of all three fingers. The sensor uses two mirrors to reflect and refract light so that one camera, located at the base of the sensor, can see along the entire length of the finger. The researchers have also built the sensor with a flexible backbone that can estimate the force being placed on the sensor by measuring how the backbone bends when the finger touches an object. The GelSight Svelte enables robots to perform various types of grasps, giving them more versatility in manipulation tasks.

Disney’s super-cute new bipedal robot demonstrates the power of style

Disney has unveiled a new robot at the IEEE IROS conference, inspired by the BD-1 robot from the Star Wars Jedi: Fallen Order video game. The robot is designed to mimic dog-like behaviors, with movements such as head tilting and rotating antennae. Disney is working on a long-term project to create robots with style and character, using a system that combines stylized movements designed by animators with reinforcement learning for motion control. This approach allows for expressive and unique gaits and body language in walking robots. While Disney has not disclosed specific plans for the use of these robots in its theme parks, this development is a step towards creating humanoid robots capable of communicating through body language and potentially eliciting genuine affection from humans.

This robot learns to clean your space just the way you like it

Researchers at Stanford University, in collaboration with other institutions, have developed TidyBot, a one-armed robot designed to clean and organize living spaces based on personalized preferences. TidyBot uses a large language model trained on internet data to identify categories of objects and can generalize cleaning instructions for similar items. The robot is equipped with cameras for object recognition and operates on powered wheels, with a seven-jointed arm for manipulating objects. While TidyBot achieves an 85% success rate in properly putting away objects, it still makes mistakes and struggles with unfamiliar spaces and difficult-to-grasp shapes. The researchers hope to improve the robot’s abilities and explore possibilities for multiple TidyBots collaborating on larger tasks.