The Robotics Roundup is a weekly newspost covering some of the most exciting developments in robotics from the past week.

In today’s edition we have:

- ‘Robotics is the future of food’: Artisan pizza restaurant Moto makes automation a key ingredient

- Fabric-Based Wearable Device Helps Users Navigate

- This AI-Powered Drone Demolished Human Pilots in a Race

- Dress Code for Robots

- Google DeepMind Scientists Teach Robots New Tricks with a “Language-to-Reward System”

‘Robotics is the future of food’: Artisan pizza restaurant Moto makes automation a key ingredient

Seattle-based pizza shop Moto Pizza is embracing robotics and automation to scale its business and meet increasing demand. Owner Lee Kindell uses a Picnic Pizza Station, a robotic pizza maker, to automate the pizza-making process. He is also exploring other tech solutions, including a robotic coffee barista, self-pour beverage systems, drone delivery, delivery robots, and vertical hydroponics for growing microgreens. Moto Pizza has surged in popularity and now has a three-month waitlist for orders. Kindell believes that automation and artistry can coexist, and he plans to showcase the technology rather than hide it.

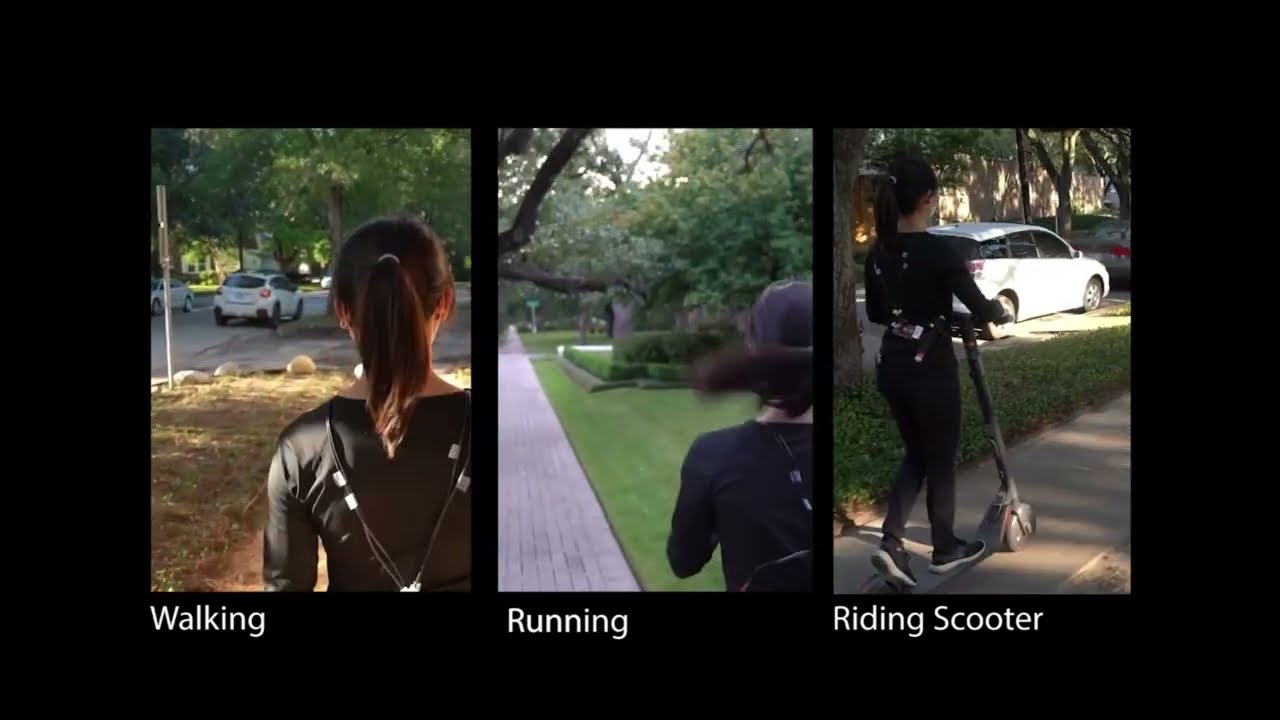

Fabric-Based Wearable Device Helps Users Navigate

Researchers at Rice University have developed a fabric-based wearable device that uses pressurized air to provide navigational cues to users. The device is lightweight and compact, and it can be integrated into clothing or other wearables. It could benefit individuals with prosthetic limbs or hearing loss, as well as professionals such as pilots and surgeons. The wearable transmits information through haptic (touch-based) cues, a channel that is used less often than visual or auditory cues. In both laboratory tests and real-world navigation tests, the cues were delivered and interpreted with high accuracy. Further development aims to improve the device’s ability to convey more complex cues.

This AI-Powered Drone Demolished Human Pilots in a Race

Researchers at the University of Zurich have developed an AI system called Swift that can fly drones and beat human pilots in races. The system is trained with deep reinforcement learning, in which the AI learns how to act in specific situations through trial and error. Using onboard sensors, the autonomous drone defeated human pilots in 15 out of 25 races and recorded the fastest race time on the track. The system still has limitations, such as difficulty coping with mistakes and with changes in the physical environment, but the research opens up possibilities for applications in autonomous vehicles, aircraft, and personal robots.

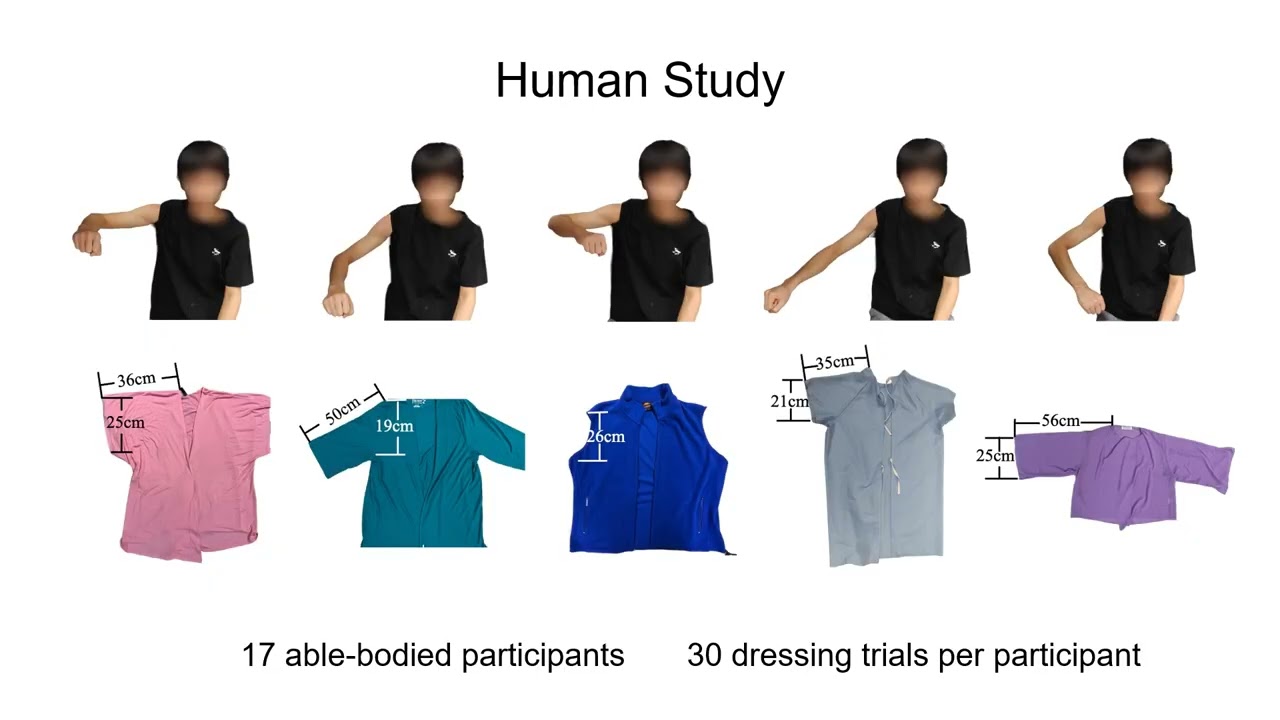

Dress Code for Robots

Researchers at Carnegie Mellon University have developed a robotic control system that uses machine learning to help dress people. The system is designed to adapt to different types of clothing, poses, and body shapes. Using a reinforcement learning-based approach, the robot can experiment with various scenarios and teach itself the optimal way to dress someone. In initial tests, the robot successfully pulled a sleeve onto an individual’s arm in 86% of cases. The researchers plan to add more advanced functionality, such as dressing both arms or pulling a t-shirt over the head, and to make the process more dynamic so that it can accommodate individuals in motion. This system could offer greater independence to those who struggle with dressing themselves.

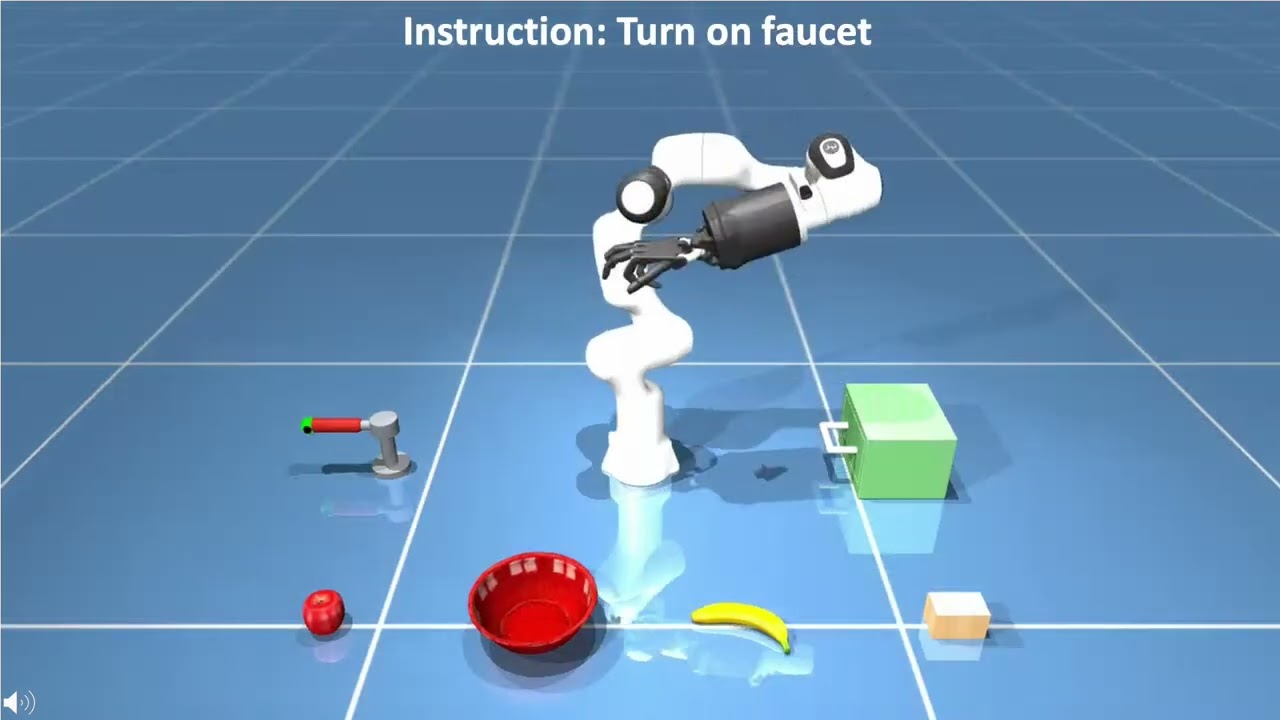

Google DeepMind Scientists Teach Robots New Tricks with a “Language-to-Reward System”

Researchers at Google DeepMind have developed a new approach to teaching robots skills through natural language instructions. They pair large language models (LLMs) with reward functions tailored to low-level actions, so that end users can interactively teach robots novel tasks. Their “language-to-reward system” translates natural language instructions into reward-specifying code, then uses that code to find optimal low-level robot actions. In testing, the approach succeeded on 90% of experimental tasks. The aim is to let users teach robots new skills and integrate them into real-world applications.
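
For readers curious what “reward-specifying code” might look like, here is a toy sketch in Python. It is not DeepMind’s code: the function names, the one-dimensional “gripper”, the 0.8 target, and the 0.1 effort weight are all made up for illustration. The reward function is hand-written to stand in for what an LLM might produce, and a simple brute-force search stands in for the real optimizer.

```python
# Toy 1-D illustration; every name and number here is hypothetical.

def reward_from_instruction(position: float, action: float) -> float:
    """Stand-in for LLM-generated reward code for the instruction
    'move the gripper to x = 0.8 and avoid large movements'."""
    target_x = 0.8
    distance_penalty = abs(position + action - target_x)  # distance left after the move
    effort_penalty = 0.1 * abs(action)                    # discourage big moves
    return -(distance_penalty + effort_penalty)


def best_action(position: float, reward_fn, steps: int = 201) -> float:
    """Brute-force search over evenly spaced actions in [-1, 1]."""
    candidates = [-1.0 + 2.0 * i / (steps - 1) for i in range(steps)]
    return max(candidates, key=lambda action: reward_fn(position, action))


def run_episode(start: float, reward_fn, num_steps: int = 5) -> float:
    """Repeatedly apply the highest-reward action and return the final position."""
    position = start
    for _ in range(num_steps):
        position += best_action(position, reward_fn)
    return position


if __name__ == "__main__":
    print(f"final position: {run_episode(0.0, reward_from_instruction):.2f}")
```

Starting from position 0.0, the gripper should settle close to the 0.8 target. The point of the split is that the instruction only shapes the reward function, while the search for actions stays the same whatever the task.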