Vision-Ultrasound Robotic System based on Deep Learning for Gas and Arc Hazard Detection in Manufacturing

Abstract

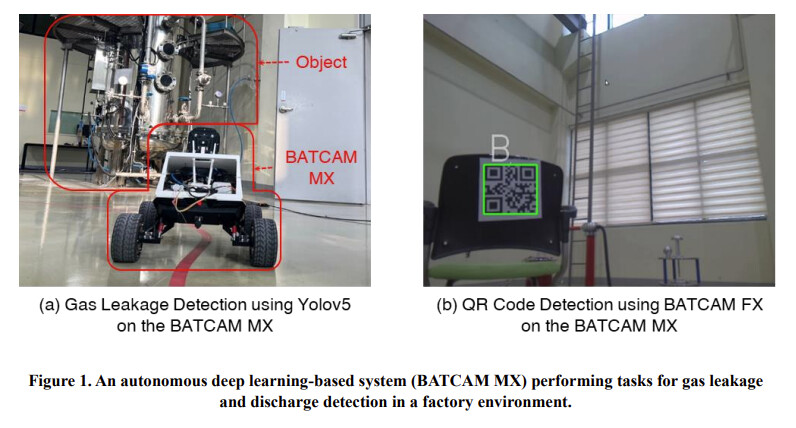

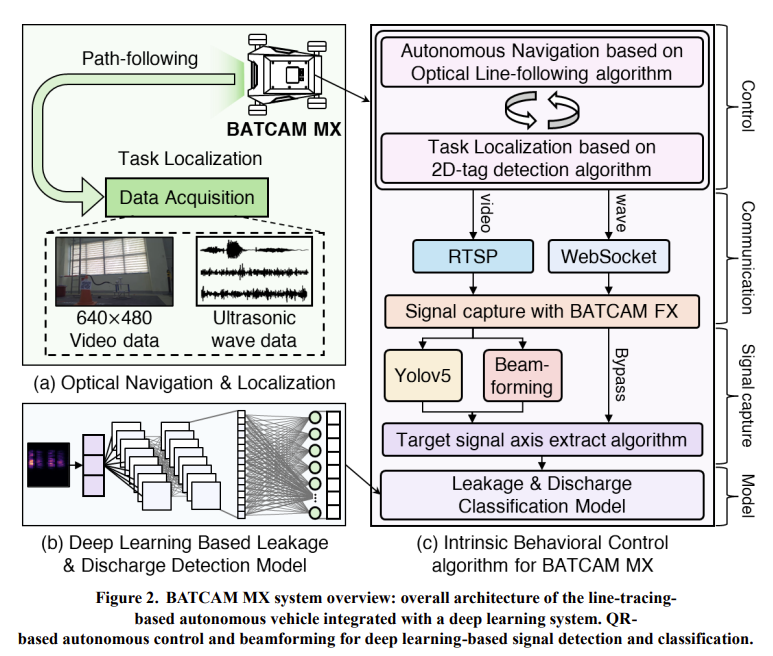

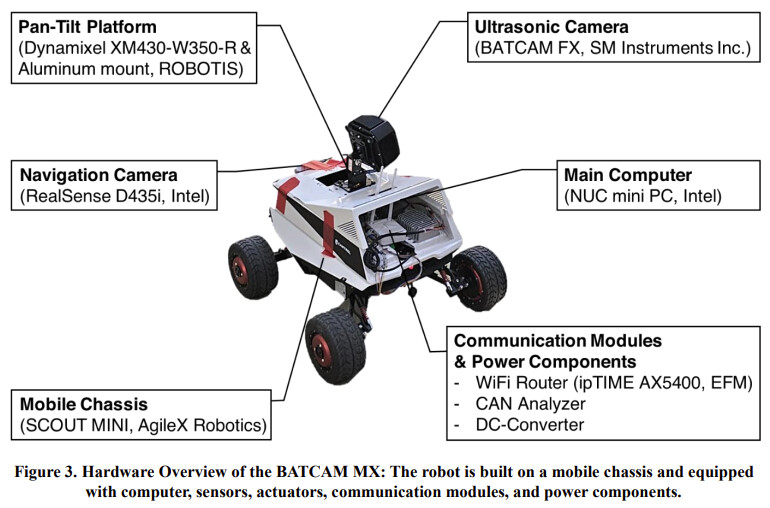

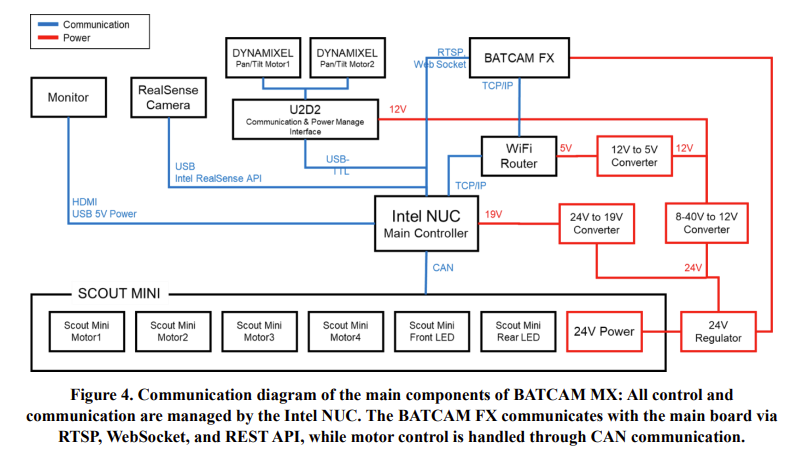

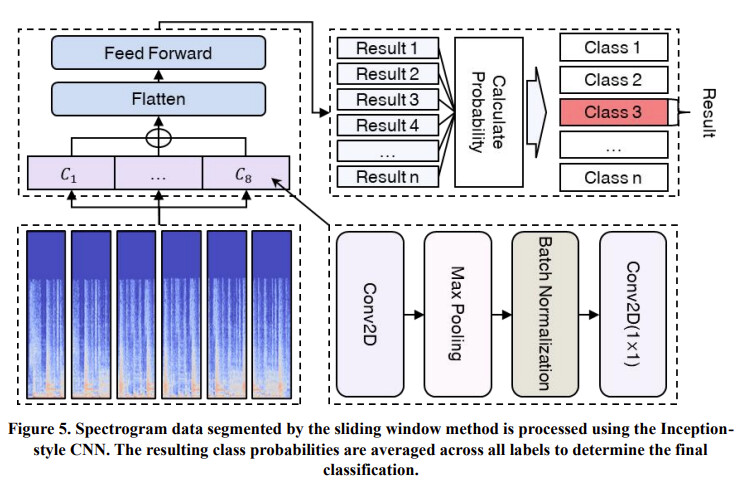

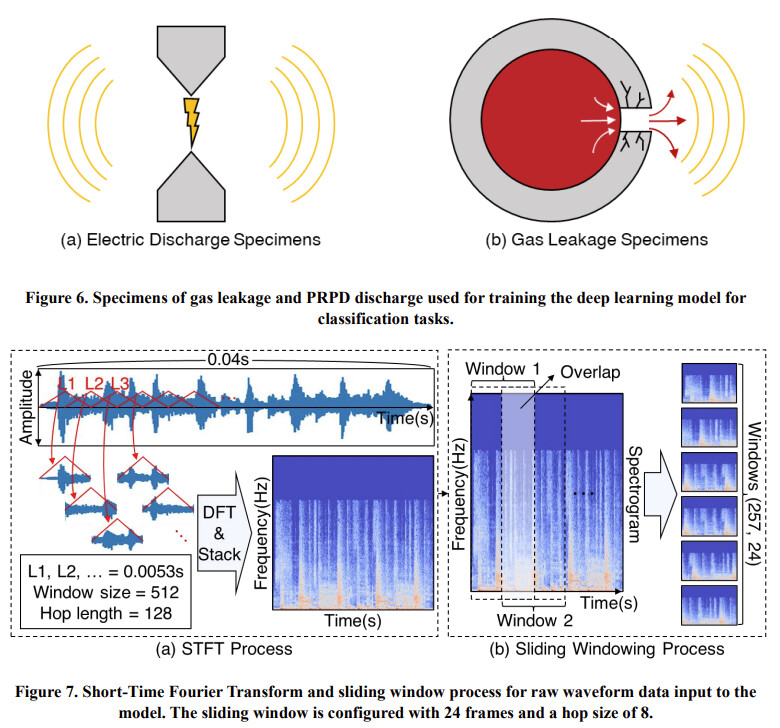

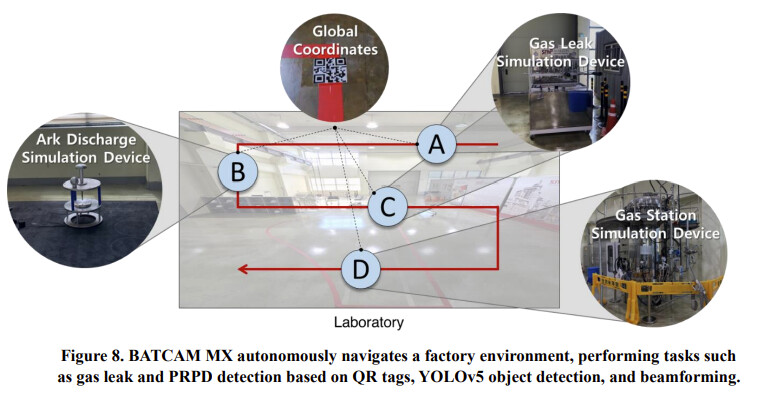

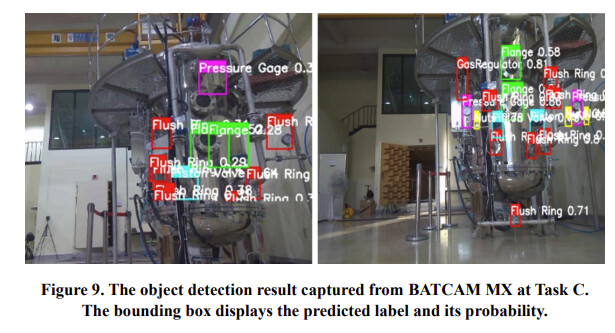

Gas leaks and arc discharges present significant risks in industrial environments, requiring robust detection systems to ensure safety and operational efficiency. Inspired by human protocols that combine visual identification with acoustic verification, this study proposes a deep learning-based robotic system for autonomously detecting and classifying gas leaks and arc discharges in manufacturing settings. The system is designed to execute all experimental tasks (A, B, C, D) entirely onboard the robot without external computation, demonstrating its capability for fully autonomous operation. Using a 112-channel acoustic camera operating at a 96 kHz sampling rate to capture ultrasonic frequencies, the system processes real-world datasets recorded in diverse industrial scenarios. These datasets include multiple gas leak configurations (e.g., pinhole, open end) and partial discharge types (Corona, Surface, Floating) under varying environmental noise conditions. The proposed system integrates YOLOv5 for visual detection and a beamforming-enhanced acoustic analysis pipeline. Signals are transformed using Short-Time Fourier Transform (STFT) and refined through Gamma Correction, enabling robust feature extraction. An Inception-inspired Convolutional Neural Network further classifies hazards, achieving an unprecedented 99% gas leak detection accuracy. The system not only detects individual hazard sources but also enhances classification reliability by fusing multi-modal data from both vision and acoustic sensors. When tested in reverberation and noise-augmented environments, the system outperformed conventional models by up to 44 percentage points, with experimental tasks meticulously designed to ensure fairness and reproducibility. Additionally, the system is optimized for real-time deployment, maintaining an inference time of 2.1 seconds on a mobile robotic platform.
By emulating human-like inspection protocols and integrating vision with acoustic modalities, this study presents an effective solution for industrial automation, significantly improving safety and operational reliability.
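
To make the acoustic feature step more concrete, here is a minimal sketch of an STFT followed by gamma correction, written with NumPy only. It is not the authors' implementation. The only value taken from the abstract is the 96 kHz sampling rate. The window length, hop size, gamma value, the max-normalization before the power law, and the synthetic 40 kHz test tone are all assumptions chosen for illustration, and the function names `stft_magnitude` and `gamma_correct` are hypothetical.

```python
import numpy as np

FS = 96_000    # sampling rate stated in the abstract (Hz)
N_FFT = 1024   # assumed window length (not given in the abstract)
HOP = 256      # assumed hop size (not given in the abstract)


def stft_magnitude(signal, n_fft=N_FFT, hop=HOP):
    """Hann-windowed STFT magnitude, shape (n_fft // 2 + 1, n_frames)."""
    window = np.hanning(n_fft)
    n_frames = 1 + (len(signal) - n_fft) // hop
    frames = np.stack(
        [signal[i * hop : i * hop + n_fft] * window for i in range(n_frames)]
    )
    return np.abs(np.fft.rfft(frames, axis=1)).T


def gamma_correct(spec, gamma=0.5, eps=1e-12):
    """Scale to [0, 1], then apply a power law.

    gamma < 1 lifts weak components relative to strong ones. The value 0.5
    is an assumption; the abstract does not state the one used.
    """
    norm = spec / (spec.max() + eps)
    return norm ** gamma


# Synthetic stand-in for one beamformed channel: a 40 kHz tone in white noise.
rng = np.random.default_rng(0)
t = np.arange(FS // 10) / FS  # 0.1 s of samples
x = 0.1 * np.sin(2 * np.pi * 40_000 * t) + 0.05 * rng.standard_normal(t.size)

spec = stft_magnitude(x)
feat = gamma_correct(spec)

# Frequency of the strongest bin, averaged over time frames.
freqs = np.fft.rfftfreq(N_FFT, d=1 / FS)
peak_hz = freqs[feat.mean(axis=1).argmax()]
print(feat.shape, peak_hz)
```

In a pipeline like the one described, an array such as `feat` would be the image-like input passed to the CNN classifier. At 96 kHz the spectrogram covers frequencies up to 48 kHz, which is why the ultrasonic test tone remains visible.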

Keywords: Gas leak detection, Arc discharge classification, Multimodal deep learning, Ultrasonic beamforming, Industrial hazard detection

Powered by DYNAMIXEL

Full Research Paper: [2502.05500] Vision-Ultrasound Robotic System based on Deep Learning for Gas and Arc Hazard Detection in Manufacturing

All Credits Go To: Jin-Hee Lee, Dahyun Nam, Robin Inho Kee, YoungKey Kim, Seok-Jun Buu, Gyeongsang National University, Seoul National University, University of Michigan, and SM Instruments Inc.

ROBOTIS e-Shop: www.robotis.us

DYNAMIXEL Page: www.dynamixel.com

DYNAMIXEL LinkedIn: DYNAMIXEL | LinkedIn